Google's SynthID Watermarking Tool: A Powerful Defense Against AI-Generated Image Misinformation

With AI-generated images becoming increasingly difficult to tell apart from real photographs, Google has introduced a tool intended to curb the spread of fake AI images.

The advent of synthetic media, including deepfakes, has blurred the boundaries between authentic and fabricated content, raising concerns about the dissemination of false information. Enter Google's innovative tool, SynthID, designed to tackle this growing issue head-on.

SynthID's Stealthy Watermarking Technology

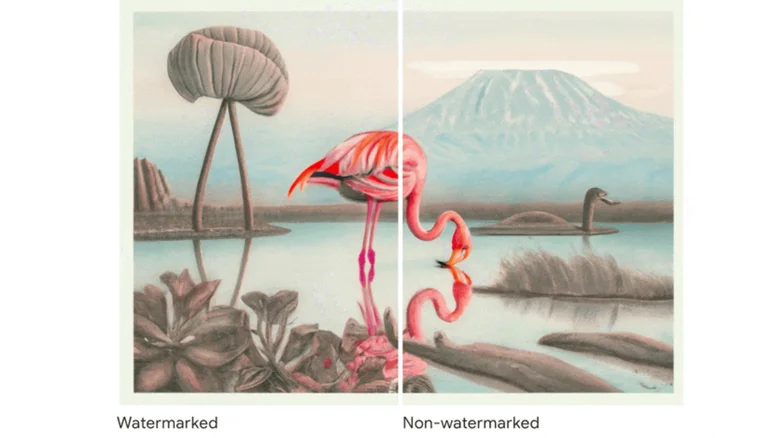

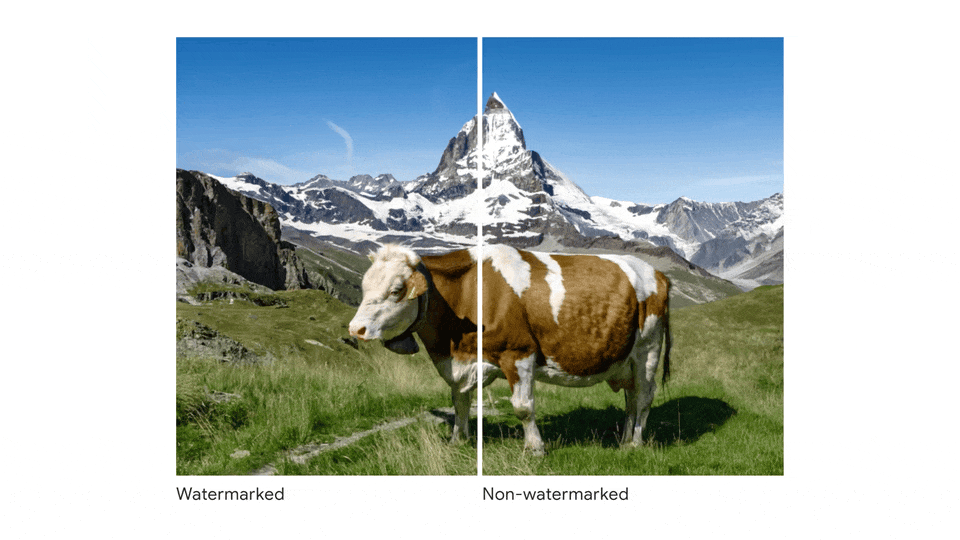

SynthID, developed by Google DeepMind in partnership with Google Cloud, embeds a digital watermark directly into the pixels of an image. What sets SynthID apart is that this watermark is invisible to the human eye, so it cannot simply be spotted and cropped or edited out the way a visible logo can.

The watermark is read by specialized computer algorithms trained to detect this hidden signature, and it is designed to be resistant to manipulation. Google presents the technology as a step towards limiting the spread of fake images and slowing the dissemination of disinformation.
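To make the idea of an invisible, software-readable watermark concrete, here is a minimal sketch in Python. It is not SynthID's method, which relies on trained models; it is a classic toy technique in which a secret key generates a faint pattern that is added to the pixel values, and a detector holding the same key checks whether the image correlates with that pattern. The function names, the key, the strength and the threshold are all hypothetical choices made for illustration.

```python
import random


def keyed_pattern(key: int, n: int) -> list[int]:
    """Pseudo-random sequence of -1/+1 values derived from a secret key."""
    rng = random.Random(key)
    return [rng.choice((-1, 1)) for _ in range(n)]


def embed(pixels: list[int], key: int, strength: int = 2) -> list[int]:
    """Nudge each grayscale pixel (0-255) up or down by `strength`."""
    pattern = keyed_pattern(key, len(pixels))
    return [min(255, max(0, p + strength * s)) for p, s in zip(pixels, pattern)]


def score(pixels: list[int], key: int) -> float:
    """Correlate the mean-centred image with the keyed pattern."""
    pattern = keyed_pattern(key, len(pixels))
    mean = sum(pixels) / len(pixels)
    return sum((p - mean) * s for p, s in zip(pixels, pattern)) / len(pixels)


def is_watermarked(pixels: list[int], key: int, threshold: float = 1.0) -> bool:
    """Assumed decision rule: a high correlation means the mark is present."""
    return score(pixels, key) > threshold


if __name__ == "__main__":
    # Stand-in for a 256x256 grayscale image, flattened to one list.
    rng = random.Random(0)
    original = [rng.randint(60, 195) for _ in range(256 * 256)]
    marked = embed(original, key=1234)

    print("marked image, right key:", is_watermarked(marked, key=1234))
    print("unmarked image:         ", is_watermarked(original, key=1234))
    print("marked image, wrong key:", is_watermarked(marked, key=9999))
```

Each pixel changes by at most 2 out of 255 brightness levels, which is far too small to see, yet the detector can still pick up the pattern across tens of thousands of pixels. A production system would need to survive cropping, resizing and compression, which this toy does not attempt.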

Revolutionizing Image Authentication

Traditionally, AI-generated images, including deepfakes, were marked with visible logos or text metadata to indicate their origin.

Unfortunately, these markings were easy to remove or edit out, rendering them ineffective in combating misinformation. Google's SynthID addresses this weakness by embedding a watermark that is imperceptible to viewers yet still detectable by software, which makes the label much harder to strip out.

SynthID's Limited Availability and Future Aspirations

For now, SynthID's availability is limited. It is offered only to select paying customers of Google's cloud computing services, and its use is optional, as Google considers it an experimental tool.

The long-term objective is to establish a system where the majority of AI-generated images can be readily identified through embedded watermarks.

Deepfake Concerns - A Threat to Truth and Unity

The rapid advancement of deepfake technology has ignited concerns among politicians, researchers, and journalists, as it blurs the lines between reality and falsehood in the online realm. This trend can exacerbate existing political divisions and hinder the dissemination of accurate information.

Watermarking technology is a key strategy that tech companies are embracing to address this challenge, with Microsoft even forming a coalition to develop a standardized approach for watermarking AI-generated images.

Conclusion

Google's SynthID watermarking tool marks a significant milestone in the quest to regulate AI-generated images and counter the spread of disinformation.

However, further progress will require continued research and collaboration among tech companies, media entities, and social media platforms. As AI technology evolves, the methods used to counter fake images must evolve with it to safeguard the integrity of online content.